August 2025 project update

Closing out August 2025, we are proud to announce our third release of the year. This release follows an intense development period where the team has reevaluated its priorities / timelines and refocused efforts on “delivering core Linux distribution tooling that will simplify our ability to scale out over time”.

We have documented some of our progress in our last two blog posts and spent the last two months further progressing towards these goals. We have implemented a basic version of virtual packages (Package Sets), continued our hardware (and VM) enablement efforts and have selectively been growing our repository where we feel it’s beneficial to our users.

What’s new Distro-wise

Whilst not an exhaustive list, some of the top-line repository updates include:

- GNOME 48.4

- Plasma 6.4.4

- Sway 1.11

- Cosmic Alpha 7

- Linux 6.15.11

- Mesa 25.2.1

- LLVM 20.1.8

- uutils-coreutils 0.1.0

- sudo-rs 0.2.8

- ffmpeg 7.1.1

- fastfetch 2.51.1 (adds AerynOS logo)

- Waydroid: Add at 1.5.4

- openvpn: Add at 2.6.14

- protontricks: Add at 1.13.0

- winetricks: Add at 20250102

In addition, we fixed a subtle issue with our PATH configuration that mostly affected our console logins. With this fix, we have made our login experience fully stateless. We have also enabled sulogin for a single-user root shell to diagnose and repair boot failures.

Package sets

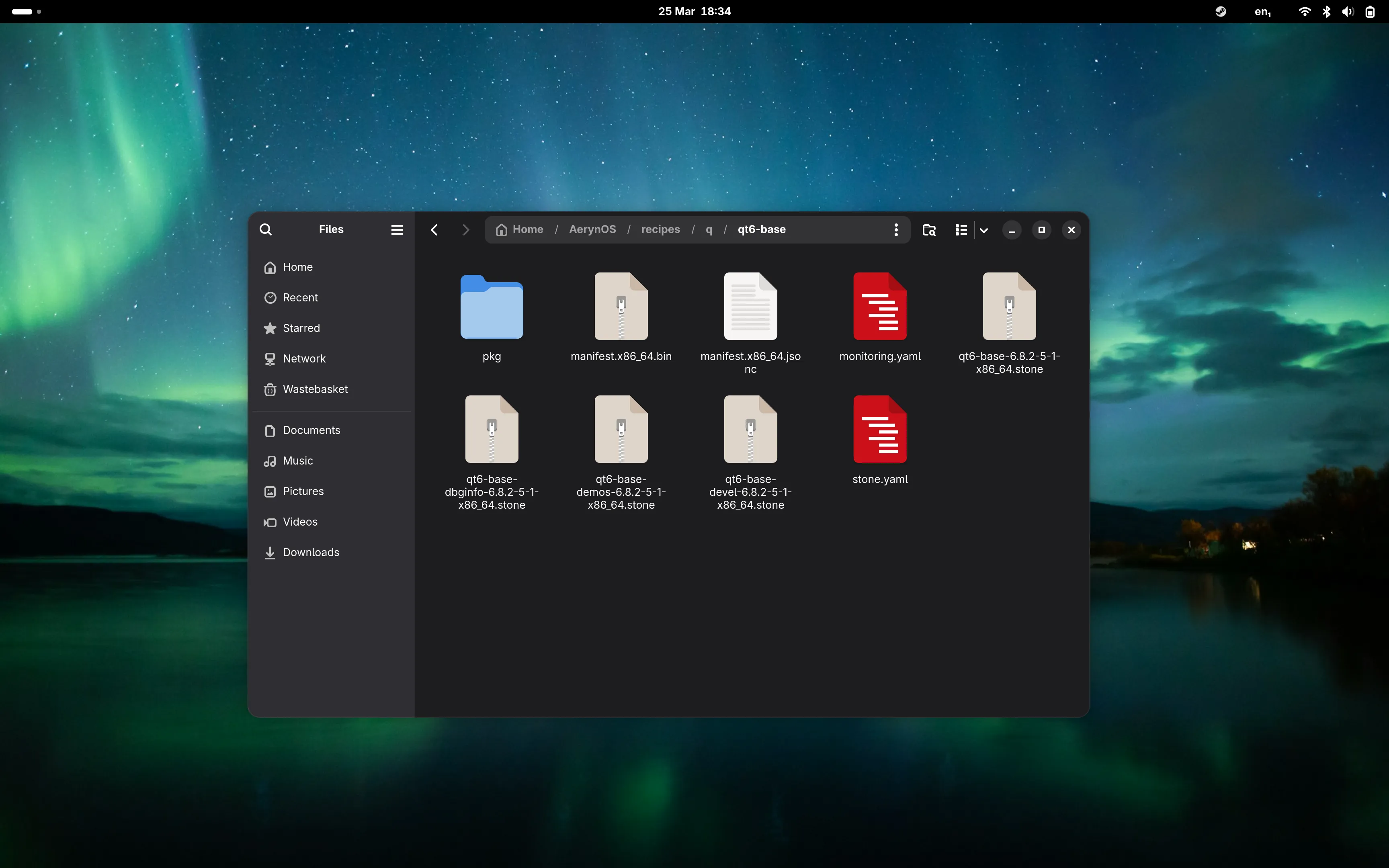

AerynOS is transitioning to a package set model for core packages installed on a user’s system.

A package set is a collection of packages that are related or used together for a specific purpose. In AerynOS, they are used for consolidating our base system packages and for each of our offered Desktop Environments / Window Managers.

Each Desktop Environment offered by AerynOS has an associated package set (usually “recommended”). Depending on the environment, we may optionally offer a “minimal” and/or “full” solution with fewer or more packages to better suit our users’ requirements.

The package set model we have implemented is a stepping stone technology, not the final solution we are looking to implement. It introduces the basic premise of virtual sets of packages and is a precursor to our “system-model” work that will allow for exact reproduction of a user’s installed system.
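To make the idea of layered sets concrete, here is a minimal Python sketch of how a set that builds on other sets could resolve to a flat package list. It is not how moss implements package sets; the set names, package names and the `resolve` helper are all hypothetical and exist only for illustration.

```python
# Illustrative only: set and package names are hypothetical,
# and this is not moss's actual implementation.
PACKAGE_SETS = {
    "base": {"includes": [], "packages": ["kernel", "moss", "coreutils"]},
    "desktop-minimal": {"includes": ["base"], "packages": ["compositor", "terminal"]},
    "desktop-recommended": {
        "includes": ["desktop-minimal"],
        "packages": ["file-manager", "text-editor"],
    },
}


def resolve(set_name, sets=PACKAGE_SETS, _seen=None):
    """Return the sorted packages pulled in by a set and every set it includes."""
    seen = _seen if _seen is not None else set()
    if set_name in seen:  # guard against include cycles
        return []
    seen.add(set_name)
    entry = sets[set_name]
    packages = set(entry["packages"])
    for included in entry["includes"]:
        packages.update(resolve(included, sets, seen))
    return sorted(packages)


if __name__ == "__main__":
    print(resolve("desktop-recommended"))
```

In this toy model a “recommended” set simply adds packages on top of a “minimal” one, which in turn sits on the base set.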

Our installation experience with lichen

To fully integrate the new package set model within the AerynOS project, we have adapted lichen to install Desktop Environments based on the associated package set. The TUI (Text User Interface) prompts guide a user to select the DE they want. Depending on the DE, lichen then installs the package set we have curated for it (recommended for the DEs, minimal for Sway), with moss determining which dependencies are required for a successful install based on that package set.

Lichen is a network installer, meaning that it downloads the latest set of packages from the AerynOS repository for installation. Tweaks to our package sets therefore don’t necessarily require a new ISO. Whilst the “live environment” may not be up to date, the user will get a fully up-to-date installation without requiring a post-install update step.

lichen usability updates

In its current state, lichen requires that a user pre-format their disk prior to attempting to install AerynOS. As a network installer, lichen also needs an active internet connection to complete an install. Previously, if either of these prerequisites was not met, the installer would output a very unhelpful Rust error message. We have added the appropriate prompts to guide users to ensure they have an active internet connection and to remind them that they need to pre-format their disk.

Whilst we could fix the pre-format requirement, a conscious decision has been made to keep this “anti-usability” feature as a barrier to entry for “beginner Linux users”. This may seem very counterintuitive. However, whilst we are in an alpha state, we need to be careful not to position ourselves as a “beginner Linux distro” that could attract many support requests. We need to focus our time on developing our tooling and infrastructure.

Do note that in time, we will fix this issue and become more beginner friendly!

Virtual Machine usage and hardware enablement

Significant work has taken place to enable virtual machine support, both with AerynOS as the host and as the guest.

For host support, we have packaged virt-manager into our repository. The team utilised VMs for testing package set configurations and other potential breaking changes over the last month. It has sped up our development pace as VMs are more disposable by their nature.

For guest support, we have enabled a significant amount of hardware in our kernel and specifically enabled Hyper-V (based on user requests). We are also actively seeking user feedback for other VM environments. If you try AerynOS in other VM solutions and have any problems, you can report those issues here.

By better supporting both VM host and guest scenarios, we hope to unblock potential contributors from exploring our distribution and tooling.

Please note that we still classify AerynOS as an alpha-status project. We do not recommend that anyone install it on hardware required for “production environments”! Our key goal is to enable the hardware / software that developers and contributors may need to make the transition to and/or explore AerynOS.

Other updates

Reilly Brogan has added scx-scheds to our repository and set scx_flash as our default scheduler. Scx_flash is a scheduler that is focused on ensuring fairness among tasks and performance predictability. More details about it can be found on the sched-ext website.

For our use case, this is helpful as it allows an AerynOS system to still be responsive whilst heavy tasks such as building packages are happening in the background.

Whilst this has been implemented in our ISO (i.e. for new installs), it will not be applied retroactively to existing installs. If you want to switch to it on an existing install, you will need to install scx-scheds.

What’s new DE-wise

There has been considerable effort around our DE provision this year, some of which is yet to materialise. We are happy to report that we are now offering KDE Plasma in our repository and it will be installable from our ISOs going forwards.

In addition, we have created a console-only installation option for more advanced users and for our own testing purposes.

KDE Plasma

It’s fair to say that KDE Plasma has been one of the biggest requests we have received and we are happy to report that it is now available in our repository. Reilly Brogan has done a fantastic job packaging up the latest 6.4.4 version into our repository with both sddm and plasma-login-manager offered as login managers.

A running bug tracker can be found here to report any issues. Please do test it out and help us find and resolve any undocumented bugs that remain.

We are far enough along in our bring-up and testing process for KDE Plasma that we are comfortable offering it as an installation option in our ISOs going forwards.

Cosmic

Within the AerynOS repository, Cosmic DE sits at the release tag of Alpha 7. Given the significant pace of development of Cosmic, we are looking to move to a more frequent update cycle to incorporate bug fixes and new feature releases, tracking System76’s repo-release repository. Whilst still in flux, we are looking at a bi-weekly update frequency to balance maintainer burden with keeping the DE up to date. The first of these updates, bringing the Cosmic DE packages to their most recent versions, will land in the AerynOS repository in the coming days.

Following recent engagement efforts, we have been seeing new users and contributors checking out AerynOS and specifically our Cosmic spin. Through this additional testing, we are seeing more active engagement on keeping Cosmic updated and bugs squashed. Given its alpha status, we don’t expect a fully bug-free user experience, so please bear this in mind if choosing Cosmic. We are classifying our Cosmic spin as a “Technical Preview” given that both Cosmic and AerynOS are currently in alpha status.

Moving forward, we are looking to package up more of the available Cosmic applets and generally polish the Cosmic experience on AerynOS. If you have an interest in packaging and specifically in the Cosmic Desktop, feel free to get in touch as we could always use more support in testing and improving upon our DE experience.

Sway

We have had Sway within our repository since early last year but did not really highlight its inclusion. Sway has been updated to v1.11 and we have also included Waybar and a few other packages to make ricing Sway a nicer experience on AerynOS.

We debated including Sway as an installable option from our ISO. However, we have made the decision to defer this to a future release. We have created an initial “minimal” package set for Sway which includes the bare minimum to get started on ricing it, but it has not yet been validated to a level where we are comfortable shipping it to users, even in our alpha state.

It remains in our repository and will continue being worked on as we progress into the second half of the year. In time, we would also like to develop a couple of pre-configured Sway configs as additional package sets so users not already familiar with Sway can jump right in without having as much background experience.

Console-only

In addition to the other environments, we have created a very minimal package set that will boot into the Linux console without any Desktop Environment. Users can use this console-only option as a starting point to configure a system install exactly to their requirements, with only the packages they wish to have included, or as the basis for a new DE/WM to be included within AerynOS.

Given the way we have layered DE/WM package sets over our base package sets, a user is able to install any of the other DE/WM options on top of this console-only solution. This has been very helpful for the AerynOS team in testing our different offerings.

GNOME

Other than updating to the latest 48.4 version, there is not much else to say on GNOME. To its credit, it has been working smoothly on AerynOS so there has not been any major work required.

It remains our default option for our ISO live environment and should only require updating to new versions as they release. If you do happen to discover an “undocumented feature”, please feel free to report it here.

What’s new tooling-wise

Moss state diff

Joey Riches has delivered a new command “moss state diff” which allows users to check the differences between two states. This is very useful when you want to revert to an older state.

Each state is identified by a number, and until now there was no way to see what a given state contained. With this new command, you can inspect a state and check whether it is the one you are looking for.

The command requires that you provide two state numbers. It returns the differences in package versions, along with any new / removed packages, between the two states you have specified.
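The kind of comparison described above can be sketched in a few lines of Python. This is a conceptual illustration only, not moss’s implementation or output format: states are modelled as plain dictionaries mapping package names to versions, and both the `diff_states` helper and the sample state contents are made up for the example.

```python
# Illustrative only: states are modelled as {package: version} dicts with
# made-up contents; moss's real state storage and output format differ.
def diff_states(old, new):
    """Compare two states and report added, removed and changed packages."""
    added = {name: new[name] for name in new.keys() - old.keys()}
    removed = {name: old[name] for name in old.keys() - new.keys()}
    changed = {
        name: (old[name], new[name])
        for name in old.keys() & new.keys()
        if old[name] != new[name]
    }
    return {"added": added, "removed": removed, "changed": changed}


if __name__ == "__main__":
    state_a = {"mesa": "25.1.0", "sway": "1.10", "nano": "8.0"}
    state_b = {"mesa": "25.2.1", "sway": "1.10", "waybar": "0.12"}
    for kind, entries in diff_states(state_a, state_b).items():
        print(kind, entries)
```

Packages whose version is identical in both states are left out of the result, which keeps the report focused on what a rollback would actually change.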

Moss search-file

We have also landed another new moss command “moss search-file”. This works similarly to “moss search”, but it operates at the file level and lets users ask moss which package any given installed file under /usr belongs to.
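Conceptually, a file-level lookup like this amounts to inverting a package-to-files mapping. The Python sketch below shows that idea under stated assumptions: the package names, file paths and helper functions are hypothetical, and it says nothing about how moss actually stores or queries this information.

```python
# Illustrative only: package names and file lists are hypothetical.
INSTALLED_FILES = {
    "example-shell": ["/usr/bin/example-sh", "/usr/share/doc/example-shell/README"],
    "example-editor": ["/usr/bin/example-edit"],
}


def build_file_index(installed):
    """Invert {package: [files]} into {file: package} for quick lookups."""
    return {path: package for package, files in installed.items() for path in files}


def search_file(path, index):
    """Return the owning package for a path, or None if nothing claims it."""
    return index.get(path)


if __name__ == "__main__":
    index = build_file_index(INSTALLED_FILES)
    print(search_file("/usr/bin/example-edit", index))
```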

What we are working on

Packagekit and appstream generator work

Joey Riches has picked up and continued his earlier packagekit integration work for moss, which will allow moss to hook into our various DE software centres (GNOME Software, KDE Discover and Cosmic Store).

Until now, users could only install AerynOS .stone packages through the terminal. This integration is a significant usability upgrade, though we still recommend that our users are familiar with how to interact with moss via the command line.

Alongside packagekit, we now also have appstream metadata hosted on our dotdev site. Work is ongoing with both packagekit and appstream, but the groundwork is complete. We can build from this point towards fully developed software centre integration.

Once this lands in our repository, we will take another step towards making AerynOS a more user friendly distribution.

Documentation improvements

Outside of the code development, there is a renewed focus on our documentation site. This is a continuing and incremental exercise with improvements coming across the board.

Over the last few months, we have improved the FAQ page, added more information on how to update packages on an AerynOS system and added additional information around the Desktop Environments we offer. We have also added specific background detail about how AerynOS is different to other distributions on our Philosophy page.

We will continue fleshing out our documentation in the coming months, with a specific focus on how to contribute, both to the project itself and by creating packages and submitting them for inclusion in our repository.

Some of the feedback we have received is that documentation is fragmented and/or not yet created. This is a frustration we can remove through our documentation efforts and we have new contributors helping out in this aspect too.

We are always looking for more support so if you have any interest in getting involved with our documentation efforts, please feel free to reach out on Matrix and specifically engage with NomadicCore.

Next steps

Our focus for the second half of the year remains similar to what we have detailed in our previous two blog posts.

We are working towards versioned repositories which will allow the team to deliver new features to our os-tooling (moss and boulder) in a seamless fashion. Versioned repositories are a prerequisite and gateway to future features that we will deliver in AerynOS, hence their prioritisation.

For the os-tooling, we are adding structured logging for better insight and reporting, improving error handling, ensuring we deliver more helpful message output, and looking towards adding JSON output for all of this so that “structured output” can be parsed more easily across process boundaries. We continue to add low hanging fruit features
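As a rough illustration of why JSON output helps across process boundaries, the Python sketch below emits one JSON object per line and parses it back on the receiving side. It is a generic example of the technique, not the format moss or boulder will use; the field names and the `emit_event` / `parse_event` helpers are assumptions made for this sketch.

```python
import json
import sys
import time


def emit_event(level, message, stream=sys.stdout, **fields):
    """Write one JSON object per line so another process can parse it."""
    record = {"ts": time.time(), "level": level, "message": message, **fields}
    line = json.dumps(record, sort_keys=True)
    stream.write(line + "\n")
    return line


def parse_event(line):
    """Decode a single line produced by emit_event."""
    return json.loads(line)


if __name__ == "__main__":
    # Hypothetical event: the field names are not taken from any real tool.
    emit_event("info", "package installed", package="example", state=42)
```

A consumer reading such lines does not need to scrape human-oriented text, which is the main appeal of structured output over free-form log messages.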

Download AerynOS

The link for our latest ISO can be found on our download page.